Highlights

- Agentic artificial intelligence (AI) demands a robust governance foundation because of the risks associated with autonomous decision-making, security and privacy concerns, data integration barriers, resource intensity, and evolving regulatory expectations.

- A unified governance model with six core pillars (governance framework, technological guardrails, cross-functional collaboration, data governance, AI literacy, and regulatory compliance) provides the structural backbone needed to scale agentic AI responsibly.

- Continuous monitoring, feedback loops, and change management practices ensure that governance remains adaptive, enabling enterprises to evolve AI systems responsibly as risks, regulations, and operational conditions shift.

Key principles and challenges

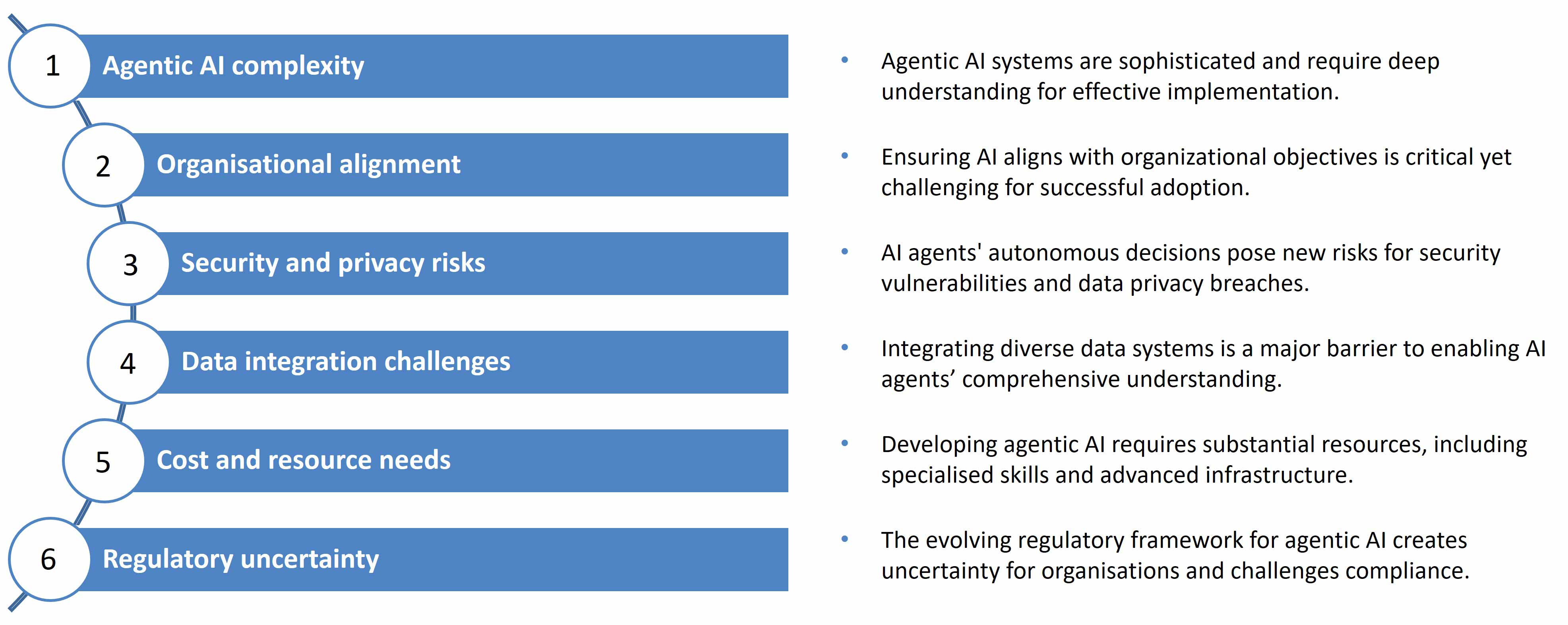

Agentic artificial intelligence (AI) applications are systems capable of autonomous decision making and action. While this autonomy creates significant opportunities, it also introduces new challenges for organisations (see Figure 1).

The key challenges in agentic AI’s adoption include:

- High system complexity requiring deep technical understanding

- Difficulty aligning autonomous behaviour with organisational objectives

- Increased security and data privacy risks from independent decisions

- Data integration barriers due to diverse enterprise systems

- Significant investment in skills, infrastructure, and resources

- Regulatory uncertainty due to evolving compliance requirements

These challenges make governance essential rather than optional: it is the means by which organisations manage complexity, control risk, and keep autonomous systems aligned with organisational objectives. Regulatory frameworks such as the European Union Artificial Intelligence Act also set standards for responsible AI.

Key constituents

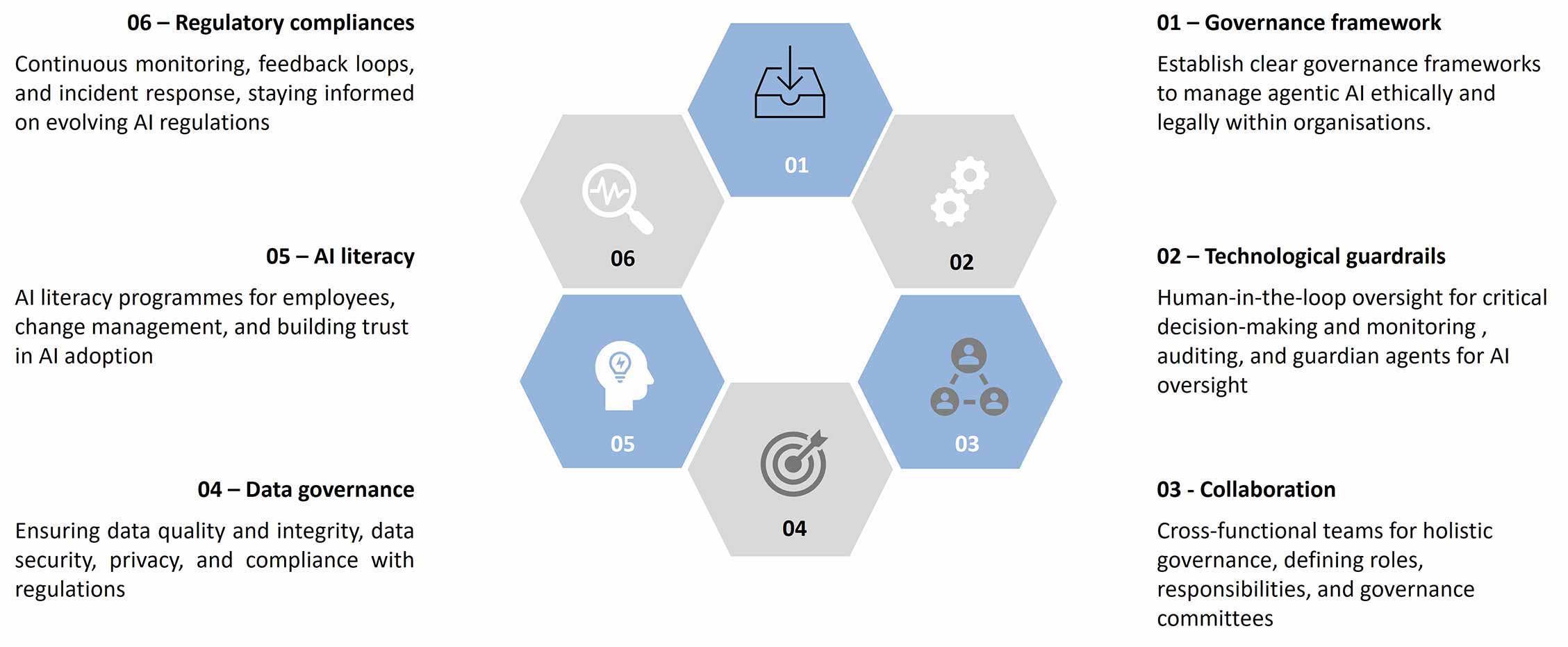

To achieve enterprise-grade control, governance for agentic AI should be organised into six key constituents (see Figure 2):

- Governance framework to define ethical and legal boundaries

- Technological guardrails such as human-in-the-loop oversight, monitoring, auditing, and guardian agents

- Cross-functional collaboration to define roles, responsibilities, and governance committees

- Data governance to ensure data quality, security, privacy, and compliance

- AI literacy programmes to support change management and build trust in AI

- Regulatory compliance enabled through monitoring, feedback loops, and incident response

Together, these constituents unify policy, controls, people, and compliance into a single operating discipline.

Governance frameworks

Governance frameworks for agentic AI define ethical boundaries and compliance expectations. Ethical principles guide responsible AI behaviour, while adherence to regulations such as the European Union Artificial Intelligence Act and the General Data Protection Regulation (GDPR) reinforces the commitment to data protection.

Governance becomes executable when policies are in formats that AI systems can interpret and follow autonomously, ensuring consistent and compliant behaviour. Risk assessment frameworks identify issues such as bias, security vulnerabilities, and unintended consequences early. Structured evaluation methods assess the severity and likelihood of identified risks, enabling targeted mitigation strategies. Centralised control is established by registering all AI agents, providing real‑time visibility into deployment, usage, and risk across projects.
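The risk assessment and agent registry ideas above can be illustrated with a minimal sketch. It assumes a simple severity-times-likelihood score on a three-level scale and an in-memory registry; the class names, the threshold of 6, and the example agents are all hypothetical and are not taken from any specific framework.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Dict, List


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Risk:
    name: str
    severity: Level
    likelihood: Level

    @property
    def score(self) -> int:
        # Severity x likelihood matrix; the scale is an illustrative assumption.
        return int(self.severity) * int(self.likelihood)


@dataclass
class AgentRecord:
    agent_id: str
    owner: str
    project: str
    risks: List[Risk] = field(default_factory=list)

    @property
    def max_risk_score(self) -> int:
        return max((r.score for r in self.risks), default=0)


class AgentRegistry:
    """Central, in-memory inventory of agents (hypothetical structure)."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._agents:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self._agents[record.agent_id] = record

    def high_risk_agents(self, threshold: int = 6) -> List[str]:
        # Agents whose worst risk meets or exceeds the threshold.
        return sorted(
            a.agent_id for a in self._agents.values() if a.max_risk_score >= threshold
        )


registry = AgentRegistry()
registry.register(AgentRecord(
    "invoice-bot", "finance", "ap-automation",
    [Risk("biased vendor ranking", Level.MEDIUM, Level.LOW),
     Risk("unintended payment", Level.HIGH, Level.MEDIUM)],
))
registry.register(AgentRecord(
    "faq-bot", "support", "helpdesk",
    [Risk("stale answer", Level.LOW, Level.MEDIUM)],
))
print(registry.high_risk_agents())
```

In practice the registry would more likely be a shared service backed by persistent storage, with scores feeding the periodic inventory and risk reviews.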

Technological guardrails and oversight

A critical component of governance is implementing technological guardrails and oversight mechanisms that provide human-in-the-loop control, monitoring, and explainability.

Human-in-the-loop

- Human-in-the-loop (HITL) systems ensure human oversight of critical AI-driven decisions, enhancing safety and reliability.

- Guardrails that allow human intervention in high-risk AI applications help prevent errors.
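A human-in-the-loop gate can be sketched as a function that lets low-risk actions proceed and routes high-risk ones to a human approver. This is a minimal sketch under stated assumptions: the `ProposedAction` type, the 1 to 9 risk scale, and the threshold are hypothetical, and the approver is modelled as a plain callback rather than a real review workflow.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    agent_id: str
    description: str
    risk_score: int  # hypothetical 1-9 scale


def execute_with_oversight(
    action: ProposedAction,
    approver: Callable[[ProposedAction], bool],
    threshold: int = 6,
) -> str:
    """Run low-risk actions directly; ask a human before high-risk ones."""
    if action.risk_score < threshold:
        return "executed"
    if approver(action):
        return "executed after approval"
    return "blocked"


low = ProposedAction("faq-bot", "answer a billing FAQ", risk_score=2)
high = ProposedAction("invoice-bot", "release a supplier payment", risk_score=9)

print(execute_with_oversight(low, approver=lambda a: False))
print(execute_with_oversight(high, approver=lambda a: False))
```

The approver is never consulted for the low-risk action, which keeps human attention focused on the decisions that warrant it.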

Monitoring

- Tools continuously track AI decisions, inputs, and outputs to ensure transparency and auditability.

- AI agents supervise other AI systems to detect anomalies and policy deviations effectively.

- Oversight mechanisms enable continuous monitoring and control of AI for responsible decision‑making.
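The monitoring points above can be illustrated with a small audit log plus a guardian check that flags policy deviations. This is a sketch only: the allowed-tool list, the log fields, and the function names are assumptions for illustration, and a guardian agent in production would typically be far richer than a set lookup.

```python
import json
import time
from typing import Any, Dict, List

# Hypothetical policy: the only tools this class of agent may call.
ALLOWED_TOOLS = {"search_kb", "read_ticket"}


class AuditLog:
    """Append-only record of agent decisions, inputs, and outputs."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def record(self, agent_id: str, tool: str, inputs: Dict[str, Any], output: str) -> Dict[str, Any]:
        entry = {
            "ts": time.time(),
            "agent_id": agent_id,
            "tool": tool,
            "inputs": inputs,
            "output": output,
        }
        self.entries.append(entry)
        return entry

    def to_jsonl(self) -> str:
        # One JSON object per line, convenient for shipping to a log store.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.entries)


def guardian_review(entries: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Return the entries that used a tool outside the allowed policy."""
    return [e for e in entries if e["tool"] not in ALLOWED_TOOLS]


log = AuditLog()
log.record("support-bot", "search_kb", {"query": "refund policy"}, "3 articles found")
log.record("support-bot", "delete_ticket", {"ticket_id": 42}, "deleted")

violations = guardian_review(log.entries)
print([v["tool"] for v in violations])
```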

Explainability

- Limiting AI agent autonomy reduces the risk of unintended or harmful behaviour, making operation safer.

- Using explanation methods such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) to justify decisions improves trust and accountability in AI systems.
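SHAP builds on Shapley values, which attribute a prediction to individual features. The sketch below computes exact Shapley values by enumerating every feature coalition for a tiny hypothetical scoring function. It does not use the `shap` library or reproduce its API, and exact enumeration is only practical for a handful of features; the model, feature names, and baseline are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict


def shapley_values(
    predict: Callable[[Dict[str, float]], float],
    instance: Dict[str, float],
    baseline: Dict[str, float],
) -> Dict[str, float]:
    """Exact Shapley attribution of predict(instance) relative to predict(baseline)."""
    features = list(instance)
    n = len(features)

    def value(coalition: frozenset) -> float:
        # Features in the coalition take the instance value, the rest the baseline.
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in features}
        return predict(x)

    phi: Dict[str, float] = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):
            # Shapley weight for coalitions of size k.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def credit_score(x: Dict[str, float]) -> float:
    # Hypothetical linear model used only for this example.
    return 0.5 * x["income"] - 2.0 * x["late_payments"]


instance = {"income": 4.0, "late_payments": 1.0}
baseline = {"income": 2.0, "late_payments": 0.0}
phi = shapley_values(credit_score, instance, baseline)
print(phi)
```

For a linear model each attribution equals the weight times the feature's deviation from the baseline, and the attributions sum to the difference between the two predictions, which gives a quick sanity check on the result.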

Together, these mechanisms keep AI behaviour safe at enterprise scale.

Operating model

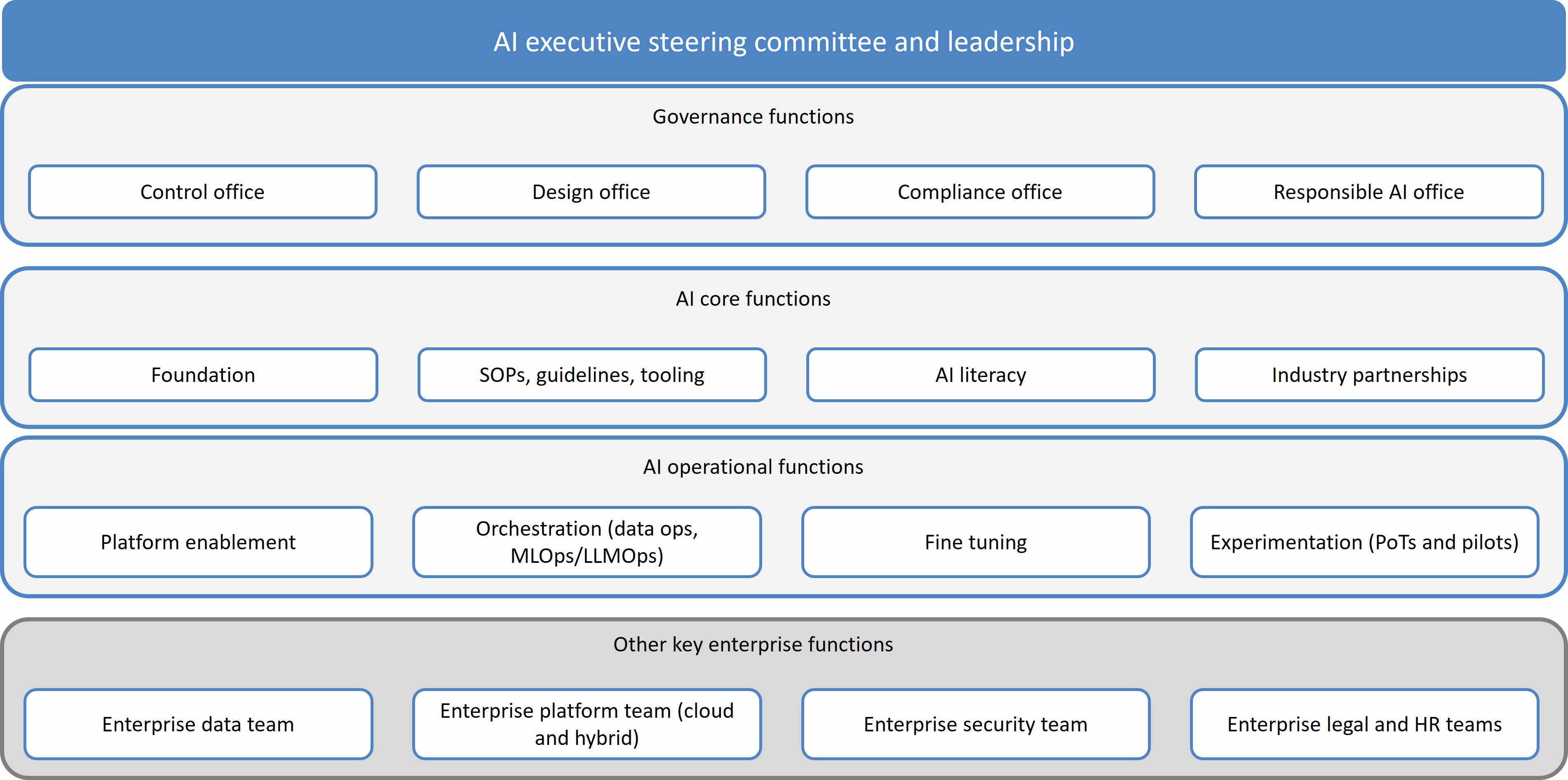

An enterprise AI office is recommended for effective governance (see Figure 3). The office includes an AI executive steering committee and leadership, and organises governance functions through the control, design, compliance, and responsible AI offices. Alongside these governance functions, AI core functions provide foundational elements such as standard operating procedures (SOPs), guidelines, tooling, AI literacy, and industry partnerships. AI operational functions support execution, covering platform enablement, orchestration (DataOps, MLOps/LLMOps), fine-tuning, and experimentation through proofs of technology and pilots. AI governing bodies then define decision pathways and oversight responsibilities.

Within this structure, responsibilities are divided as follows:

- The executive steering committee sponsors the programme and invests in people, processes, and infrastructure.

- The control office evaluates and approves AI ideas for proof of concept, minimum viable product, and production, and drives periodic AI inventory review and risk cycles.

- The responsible AI office defines practices aligned with the organisation’s responsible AI principles and with other recognised industry and regulatory frameworks.

- The compliance office ensures regulatory compliance and tracks updates.

- The design office reviews key designs and authorises changes while ensuring adherence to best practices and information security standards.

Trust and improvement

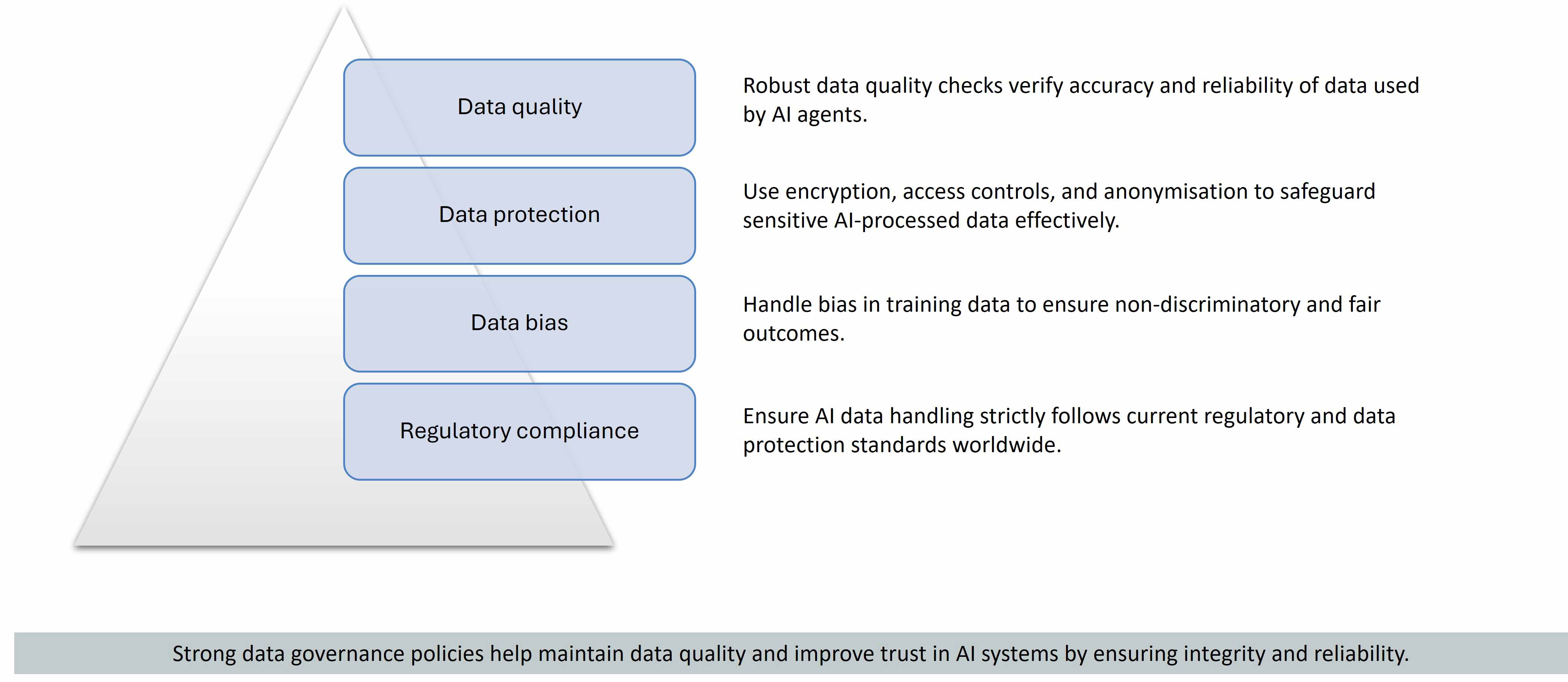

Trust is essential for user acceptance of agentic AI and is built through responsible implementation, governance, literacy, and continuous improvement. A key foundation of trust is strong data governance, which ensures that AI systems operate with integrity, fairness, and compliance.

Data governance for agentic AI is anchored on four core pillars: data quality, data protection, data bias management, and regulatory compliance (see Figure 4). Robust data quality checks ensure the accuracy and reliability of data used by AI agents. Data protection measures such as encryption, access controls, and anonymisation safeguard sensitive information. Addressing bias in training data supports fair and non‑discriminatory outcomes, while regulatory compliance ensures adherence to global data protection standards.
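Two of these pillars, data quality and data protection, can be sketched in a few lines. The example assumes a required-field check as the quality rule and keyed hashing (HMAC-SHA256) as the protection measure. Keyed hashing is pseudonymisation rather than full anonymisation, since anyone holding the key can re-derive the token. The function names, the key, and the sample record are hypothetical.

```python
import hashlib
import hmac
from typing import Any, Dict, List


def quality_issues(record: Dict[str, Any], required: List[str]) -> List[str]:
    """Return the required fields that are missing or empty in the record."""
    return [f for f in required if record.get(f) in (None, "")]


def pseudonymise(value: str, key: bytes) -> str:
    """Replace an identifier with a stable keyed token (not reversible without the key)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


# The key is a placeholder; a real key would come from a secrets manager.
key = b"demo-key-not-for-production"
record = {"id": 1, "email": "alice@example.com", "country": ""}

issues = quality_issues(record, required=["id", "email", "country"])
safe_record = dict(record, email=pseudonymise(record["email"], key))

print(issues)
print(safe_record["email"] != record["email"])
```

Because the token is deterministic for a given key, records can still be joined on the pseudonymised field without exposing the original value to the agent.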

In addition, AI literacy programmes and effective change management address risks such as unclear AI strategy, workforce readiness, and non‑transparent decision‑making. Continuous improvement further reinforces trust through monitoring, feedback loops, incident response, and ongoing regulatory awareness.

Implementation guidelines

The success of agentic AI governance depends on strong implementation of security, privacy, and monitoring controls. A default ‘read first, write rarely’ approach restricts high-risk operations such as unrestricted data definition language (DDL) actions, cross-tenant access, and direct exposure to personally identifiable information (PII).
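A ‘read first, write rarely’ default can be sketched as a gate that classifies each SQL statement before an agent may run it. This is a deliberately naive illustration: it looks only at the leading keyword and conservatively rejects anything containing an embedded semicolon. The keyword sets and function names are assumptions, and a real deployment would more likely rely on a proper SQL parser together with database-level permissions.

```python
READ_KEYWORDS = {"SELECT"}
WRITE_KEYWORDS = {"INSERT", "UPDATE", "DELETE", "MERGE"}
DDL_KEYWORDS = {"CREATE", "ALTER", "DROP", "TRUNCATE"}


def classify_statement(sql: str) -> str:
    """Classify a statement as 'read', 'write', 'ddl', or 'unknown'."""
    stripped = sql.strip()
    # Conservatively refuse multi-statement input (also rejects ';' in literals).
    if ";" in stripped.rstrip(";"):
        return "unknown"
    tokens = stripped.split(None, 1)
    keyword = tokens[0].upper() if tokens else ""
    if keyword in READ_KEYWORDS:
        return "read"
    if keyword in WRITE_KEYWORDS:
        return "write"
    if keyword in DDL_KEYWORDS:
        return "ddl"
    return "unknown"


def is_permitted(sql: str, write_approved: bool = False) -> bool:
    """Reads pass by default, writes need explicit approval, the rest is denied."""
    kind = classify_statement(sql)
    if kind == "read":
        return True
    if kind == "write":
        return write_approved
    return False  # DDL and unrecognised statements are denied by default


print(is_permitted("SELECT name FROM customers"))
print(is_permitted("UPDATE customers SET tier = 'gold'"))
print(is_permitted("DROP TABLE customers", write_approved=True))
```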

A multi-layer security model based on least-privilege, role-based and attribute-based access control includes:

- Authentication and role verification

- Policy-governed tool access

- Segregation of execution layers

- Auditability and agent registry governance
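The combination of role-based and attribute-based checks can be sketched as a single authorisation function. The role-to-tool mapping, the tenant and PII attributes, and all names below are hypothetical; the point is only that a request must pass every layer, so the default outcome is denial.

```python
from dataclasses import dataclass
from typing import Dict, Set

# Hypothetical least-privilege mapping from role to permitted tools.
ROLE_TOOLS: Dict[str, Set[str]] = {
    "analyst": {"run_report"},
    "operator": {"run_report", "update_record"},
}


@dataclass(frozen=True)
class Request:
    role: str
    tool: str
    agent_tenant: str
    resource_tenant: str
    contains_pii: bool


def is_allowed(req: Request) -> bool:
    # Role-based layer: the tool must be granted to the caller's role.
    role_ok = req.tool in ROLE_TOOLS.get(req.role, set())
    # Attribute-based layers: no cross-tenant access, no direct PII exposure.
    same_tenant = req.agent_tenant == req.resource_tenant
    pii_ok = not req.contains_pii
    return role_ok and same_tenant and pii_ok


print(is_allowed(Request("analyst", "run_report", "acme", "acme", False)))
print(is_allowed(Request("analyst", "update_record", "acme", "acme", False)))
print(is_allowed(Request("operator", "update_record", "acme", "globex", False)))
```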

Enterprise-grade monitoring provides end-to-end visibility across the agent lifecycle, enabling safe and compliant scaling of agentic AI.