Highlights

- Unified AI‑driven fraud detection delivers real‑time, high‑accuracy monitoring across all banking transactions, ensuring proactive risk mitigation and regulatory compliance.

- Multilayered analytics using Isolation Forest, XGBoost, and GNNs capture complex behavioral and relational fraud patterns, reducing false positives and improving investigator efficiency.

- Explainable GenAI assistants and dashboards provide clear reasoning behind alerts, accelerate case disposition, and continuously refine fraud‑detection performance.

Hybrid AI‑ML fraud detection model

Proactive fraud detection in today’s digital ecosystem requires moving beyond static rules to dynamic, artificial intelligence (AI)‑driven approaches that identify and monitor suspicious transactions at scale. The model described here focuses on banking transactions approved by the institution, applying discriminative and predictive AI, machine learning (ML), and generative AI (GenAI) to assign risk scores that guide investigators. It draws on diverse data sources (eg, transactional, demographic, personal, know your customer (KYC), anti‑money laundering (AML), and fraud management data) and transaction attributes to surface anomalies, then operationalises the resulting rules for real‑time decisions.

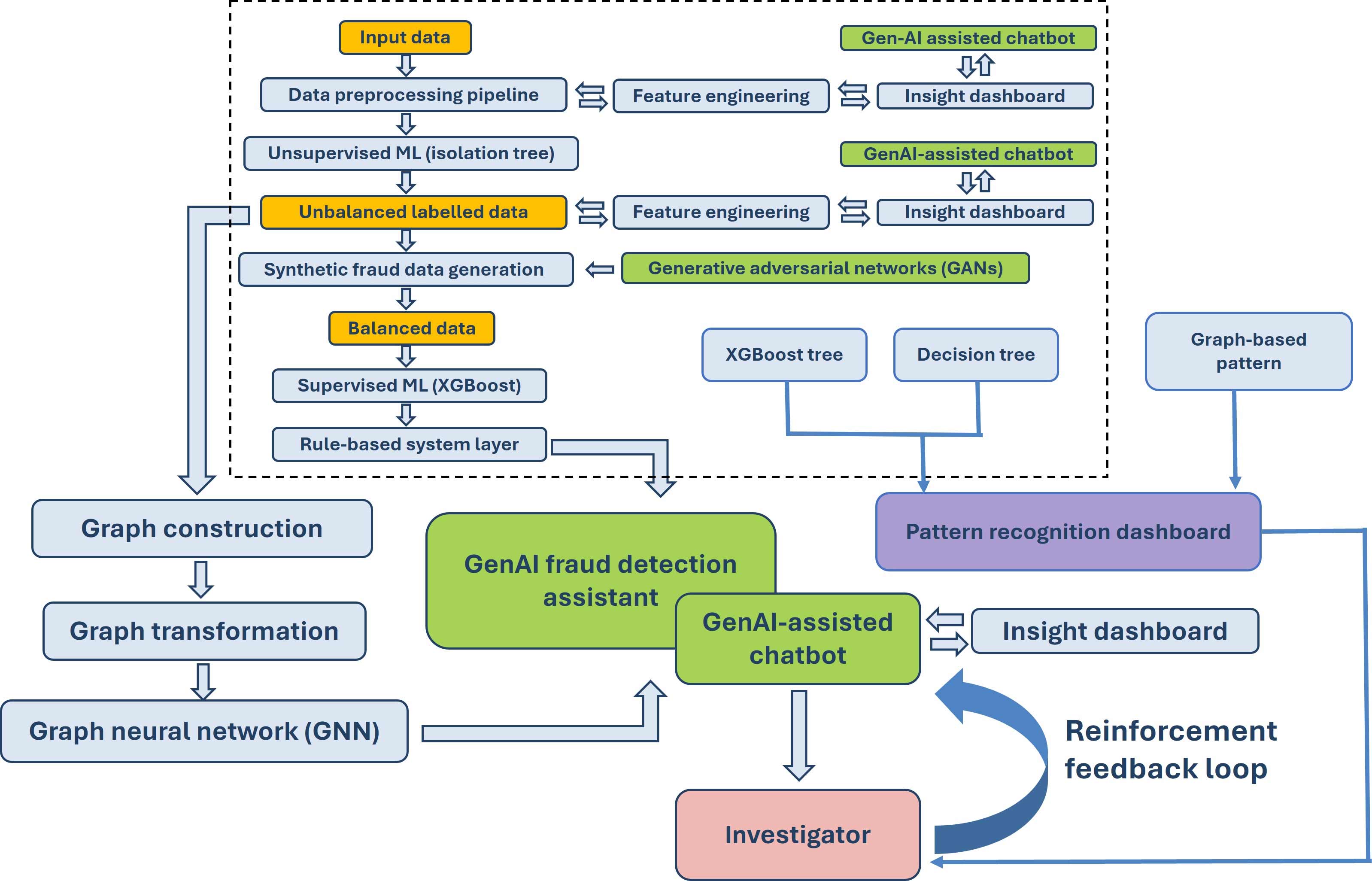

A hybrid workflow integrates data processing, anomaly detection (Isolation Forest), rule development (XGBoost), and logistic regression that yields implementable coefficients and thresholds, flagging transactions whose suspicion probability exceeds a defined threshold. Complementary architectures include a hybrid pipeline and a graph neural network (GNN) variant enhanced with synthetic fraud data generated by a generative adversarial network (GAN), supported by insight dashboards and GenAI chatbots that assist investigators and strengthen compliance objectives.

Objectives of the framework

Fraud detection aims to identify and monitor potentially fraudulent transactions across bank‑approved activity while producing risk scores that prioritise investigations and drive timely decisions. The approach moves beyond static rule sets by employing AI, ML, and GenAI techniques that adapt to evolving schemes and operate continuously.

Key objectives include:

- Dynamic detection: Replace static rules with adaptive AI-ML models for evolving fraud patterns

- Risk scoring: Assign transaction‑level scores to prioritise investigations effectively

- Operational integration: Translate model insights into actionable rules embedded in banking systems

- Scalable monitoring: Ensure processes handle high transaction volumes without compromising accuracy

- Rapid decisioning: Use predictive and discriminative techniques for timely alerts and auditability

By coupling detection with monitoring, organisations maintain vigilance, reduce manual burden, and improve triage quality across high‑volume environments.
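As a minimal illustration of risk‑based prioritisation, the Python sketch below keeps only transactions whose score meets an alert cut‑off and orders them highest‑risk first, using the standard library's `heapq.nlargest`. The `ScoredTransaction` record, the `triage_queue` helper, and the 0.5 cut‑off are hypothetical placeholders; the source does not specify how scores are thresholded for the investigator queue.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredTransaction:
    txn_id: str
    risk_score: float  # model-assigned suspicion probability in [0, 1]


def triage_queue(scored, alert_threshold=0.5, limit=None):
    """Return transactions at or above the (assumed) alert threshold, highest risk first."""
    alerts = [t for t in scored if t.risk_score >= alert_threshold]
    # nlargest returns the top-n items in descending order of risk_score
    n = len(alerts) if limit is None else limit
    return heapq.nlargest(n, alerts, key=lambda t: t.risk_score)
```

For example, a batch scored 0.12, 0.91, and 0.67 yields a worklist containing the 0.91 and 0.67 transactions, in that order; `limit` caps the worklist when investigator capacity is fixed.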

Key data sources

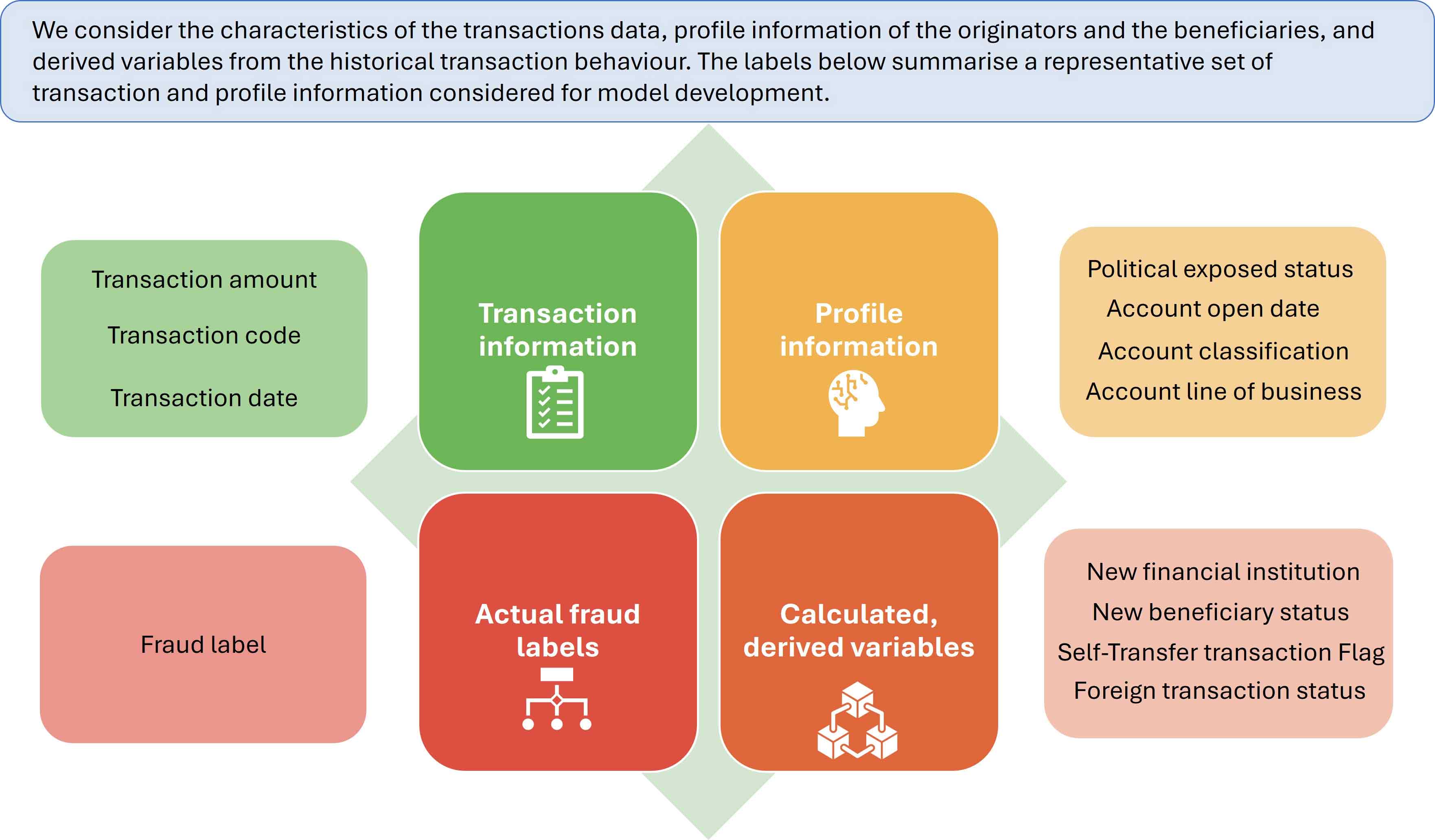

The model’s scope covers all ongoing transactions with bank approval, integrating data from multiple sources such as know your customer (KYC), anti-money laundering (AML), and fraud management systems. Transaction features include amounts, codes, dates, and fraud labels, complemented by profile attributes like political exposure status, account open date, classification, and line of business (see Figure 1).

Behavioural variables derive from historical patterns (eg, beneficiary counts, activity days, foreign transfer status, self‑transfer flags) to enrich signal strength. This breadth of input supports both anomaly detection and rule creation, improving the model’s capacity to capture nuanced behaviours across customers and beneficiaries. The comprehensive data foundation ensures coverage of legitimate and suspicious activities, enabling downstream algorithms to isolate outliers, define decision paths, and generate implementable coefficients for thresholding and risk scoring.
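The behavioural variables named above can be derived with a simple per‑customer aggregation over transaction history. This is a sketch under stated assumptions: the `Txn` schema, the `behavioural_features` helper, and treating any beneficiary country other than a hypothetical home country (`"GB"`) as a foreign transfer are illustrative choices, not details from the source.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Txn:
    # Hypothetical minimal schema; real feeds carry many more attributes
    customer_id: str
    beneficiary_account: str
    beneficiary_owner: str  # customer ID that owns the beneficiary account
    country: str            # beneficiary country code
    txn_date: date


def behavioural_features(history, home_country="GB"):
    """Aggregate per-customer behavioural variables from historical transactions."""
    acc = {}
    for t in history:
        f = acc.setdefault(t.customer_id, {
            "beneficiaries": set(), "days": set(), "foreign": False, "self": False,
        })
        f["beneficiaries"].add(t.beneficiary_account)
        f["days"].add(t.txn_date)
        f["foreign"] = f["foreign"] or t.country != home_country
        f["self"] = f["self"] or t.beneficiary_owner == t.customer_id
    # Collapse sets to counts and booleans to 0/1 flags for downstream models
    return {
        cid: {
            "beneficiary_count": len(f["beneficiaries"]),
            "activity_days": len(f["days"]),
            "foreign_transfer_flag": int(f["foreign"]),
            "self_transfer_flag": int(f["self"]),
        }
        for cid, f in acc.items()
    }
```

Counting distinct days and beneficiaries (rather than raw transaction counts) keeps these features robust to a single burst of repeated transfers.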

Model impact, business benefits, and fraud‑risk coverage

The AI‑driven fraud detection solution enhances institutional oversight by improving monitoring accuracy, reducing manual investigative effort, and reinforcing alignment with AML and financial crime compliance (FCC) requirements and with the expectations of the Financial Crimes Enforcement Network (FinCEN), the Financial Conduct Authority (FCA), and central banks. It uses anomaly detection, supervised rule discovery, and explainable GenAI to assign transaction‑level risk scores, enabling faster triage and stronger operational control.

Business benefits:

- Real‑time risk identification, allowing immediate intervention and potential loss prevention

- Lower false positives through ensemble AI models that reduce noise and increase investigator productivity

- Explainable insights via a GenAI assistant that interprets model decisions and captures analyst feedback for continuous improvement

- Expanded pattern detection using graph‑ and tree‑based analytics to identify collusion, layering, and rapid transaction bursts that traditional rules miss
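The source does not describe how the GenAI assistant obtains its reasoning. One plausible grounding step, sketched here under that assumption, is to rank each active rule's contribution to a logistic model's log‑odds (coefficient multiplied by feature value) and format the result as plain text the assistant can explain. The `explain` and `to_prompt_context` helpers, the feature names, and the text format are all hypothetical.

```python
import math


def explain(features, coefficients, intercept, top_k=3):
    """Rank each active feature's contribution (coefficient * value) to the log-odds.

    `features` and `coefficients` are dicts keyed by (hypothetical) feature names.
    """
    contributions = {
        name: coefficients[name] * value
        for name, value in features.items()
        if value and name in coefficients
    }
    log_odds = intercept + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]
    return probability, ranked


def to_prompt_context(txn_id, probability, ranked):
    """Format the explanation as plain text an assistant could be grounded on (assumed format)."""
    lines = [f"Transaction {txn_id}: suspicion probability {probability:.2f}"]
    lines += [f"- {name}: {'raises' if c > 0 else 'lowers'} risk (log-odds {c:+.2f})" for name, c in ranked]
    return "\n".join(lines)
```

Grounding the assistant on these computed contributions, rather than asking it to infer reasons, keeps its explanations traceable to the model's actual coefficients.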

Fraud risks covered:

- Synthetic identity fraud, where fraudsters blend real and fabricated data

- Collusive and network‑based fraud rings, detectable through relational and graph analysis

- Money‑laundering paths spread across multiple entities

- Phishing‑driven unauthorised transfers requiring real‑time alerting

- High false‑positive rates inherent in legacy rule‑based engines
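Money‑laundering paths spread across several entities often appear as time‑ordered chains of transfers. The sketch below, a simple depth‑first search in plain Python, reports chains of at least three hops in which each transfer leaves the previous receiver within a time gap. The `layering_chains` helper, the hour‑based timestamps, and the 48‑hour gap are hypothetical assumptions, not parameters from the source.

```python
from collections import defaultdict


def layering_chains(transfers, min_hops=3, max_gap_hours=48):
    """Find time-ordered transfer chains (A -> B -> C -> ...) of at least `min_hops` hops.

    `transfers` is a list of (sender, receiver, timestamp_in_hours) tuples.
    """
    outgoing, incoming = defaultdict(list), defaultdict(list)
    for sender, receiver, ts in transfers:
        outgoing[sender].append((receiver, ts))
        incoming[receiver].append(ts)

    chains = []

    def extend(path, last_ts):
        extended = False
        for receiver, ts in outgoing[path[-1]]:
            # Next hop must leave after the previous one, within the gap, to a new account
            if last_ts < ts <= last_ts + max_gap_hours and receiver not in path:
                extend(path + [receiver], ts)
                extended = True
        if not extended and len(path) - 1 >= min_hops:
            chains.append(path)

    for sender, receiver, ts in transfers:
        # Skip transfers that continue an earlier hop, so chains are reported
        # from their earliest hop rather than as overlapping suffixes
        if any(ts - max_gap_hours <= t_in < ts for t_in in incoming[sender]):
            continue
        extend([sender, receiver], ts)
    return chains
```

For example, transfers A→B at hour 0, B→C at hour 5, and C→D at hour 10 produce the single chain `["A", "B", "C", "D"]`. Allowing a path to return to its starting account would turn the same search into a simple ring detector.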

This layered approach increases fraud resilience, improves operational efficiency, and strengthens the institution’s ability to respond to evolving threats.

Conceptual flow of the hybrid fraud detection approach

The hybrid fraud detection model begins with at least 18 months of transactional data, ensuring sufficient historical context for pattern identification. Data processing aggregates key attributes such as beneficiary accounts, transaction amounts, political exposure status, and demographic indicators. Derived variables from historical behaviour enhance signal strength for anomaly detection.
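The data‑preparation step can be sketched as two checks: confirming that the history spans at least 18 whole months, and aggregating amounts per customer and beneficiary account. The helper names and the `(customer_id, beneficiary_account, amount)` tuple layout below are hypothetical; only the 18‑month minimum comes from the text.

```python
from collections import defaultdict
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months elapsed from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return months


def has_sufficient_history(txn_dates, min_months=18):
    """Check the data window spans at least `min_months` (18 in the described model)."""
    if not txn_dates:
        return False
    return months_between(min(txn_dates), max(txn_dates)) >= min_months


def aggregate_by_beneficiary(txns):
    """Sum amounts and count transfers per (customer, beneficiary) pair.

    `txns` is an iterable of (customer_id, beneficiary_account, amount) tuples (assumed layout).
    """
    totals = defaultdict(lambda: {"total_amount": 0.0, "txn_count": 0})
    for customer_id, beneficiary, amount in txns:
        agg = totals[(customer_id, beneficiary)]
        agg["total_amount"] += amount
        agg["txn_count"] += 1
    return dict(totals)
```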

Anomaly detection employs Isolation Forest, an unsupervised algorithm built on the principle that anomalous observations can be separated from normal ones with fewer random partitions. Unlike univariate methods, Isolation Forest handles multidimensional data effectively, capturing many transaction characteristics at once. Hyperparameters, such as tree count and feature selection, are tuned against actual fraud labels to maximise detection accuracy. The approach flags anomalies such as high‑value transfers to unexpected destinations, forming the foundation for subsequent rule development and implementation.
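To make the isolation principle concrete, the following is a dependency‑free sketch of the standard Isolation Forest algorithm: each tree splits a random subsample on random features at random values, and points that reach a leaf at shallow depth receive scores near 1. It is an illustration only; the class and helper names are hypothetical, and a production pipeline would more likely use a library implementation such as scikit‑learn's `IsolationForest`. The label‑driven tuning of tree count mentioned above is not shown.

```python
import math
import random


def _c(n):
    """Average path length of an unsuccessful BST search over n points; normalises depths."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    harmonic = math.log(n - 1) + 0.5772156649  # approximation of the harmonic number H(n-1)
    return 2.0 * harmonic - 2.0 * (n - 1) / n


def _build(points, depth, max_depth, rng):
    """Grow one isolation tree with random features and random split values."""
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    q = rng.randrange(len(points[0]))
    lo, hi = min(p[q] for p in points), max(p[q] for p in points)
    if lo == hi:  # cannot split on this feature; treat as a leaf (simplification)
        return ("leaf", len(points))
    split = rng.uniform(lo, hi)
    left = [p for p in points if p[q] < split]
    right = [p for p in points if p[q] >= split]
    return ("node", q, split,
            _build(left, depth + 1, max_depth, rng),
            _build(right, depth + 1, max_depth, rng))


def _path_length(x, tree, depth=0):
    """Depth at which x reaches a leaf, plus the expected depth of the unbuilt subtree."""
    if tree[0] == "leaf":
        return depth + _c(tree[1])
    _, q, split, left, right = tree
    return _path_length(x, left if x[q] < split else right, depth + 1)


class IsolationForest:
    """Minimal Isolation Forest for illustration only."""

    def __init__(self, n_trees=100, sample_size=256, seed=0):
        self.n_trees = n_trees          # tree count: a hyperparameter the text tunes on fraud labels
        self.sample_size = sample_size  # subsample size per tree
        self.rng = random.Random(seed)
        self.trees = []

    def fit(self, data):
        if len(data) < 2:
            raise ValueError("need at least two observations")
        psi = min(self.sample_size, len(data))
        max_depth = math.ceil(math.log2(psi))
        self.trees = [_build(self.rng.sample(data, psi), 0, max_depth, self.rng)
                      for _ in range(self.n_trees)]
        self._norm = _c(psi)
        return self

    def score(self, x):
        """Anomaly score in (0, 1]; values close to 1 suggest an outlier."""
        mean_path = sum(_path_length(x, t) for t in self.trees) / len(self.trees)
        return 2.0 ** (-mean_path / self._norm)
```

Because each tree sees only a small random subsample, the method scales to high transaction volumes; the scores can then serve as the anomaly labels from which rules are learned.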

Rule development and implementation

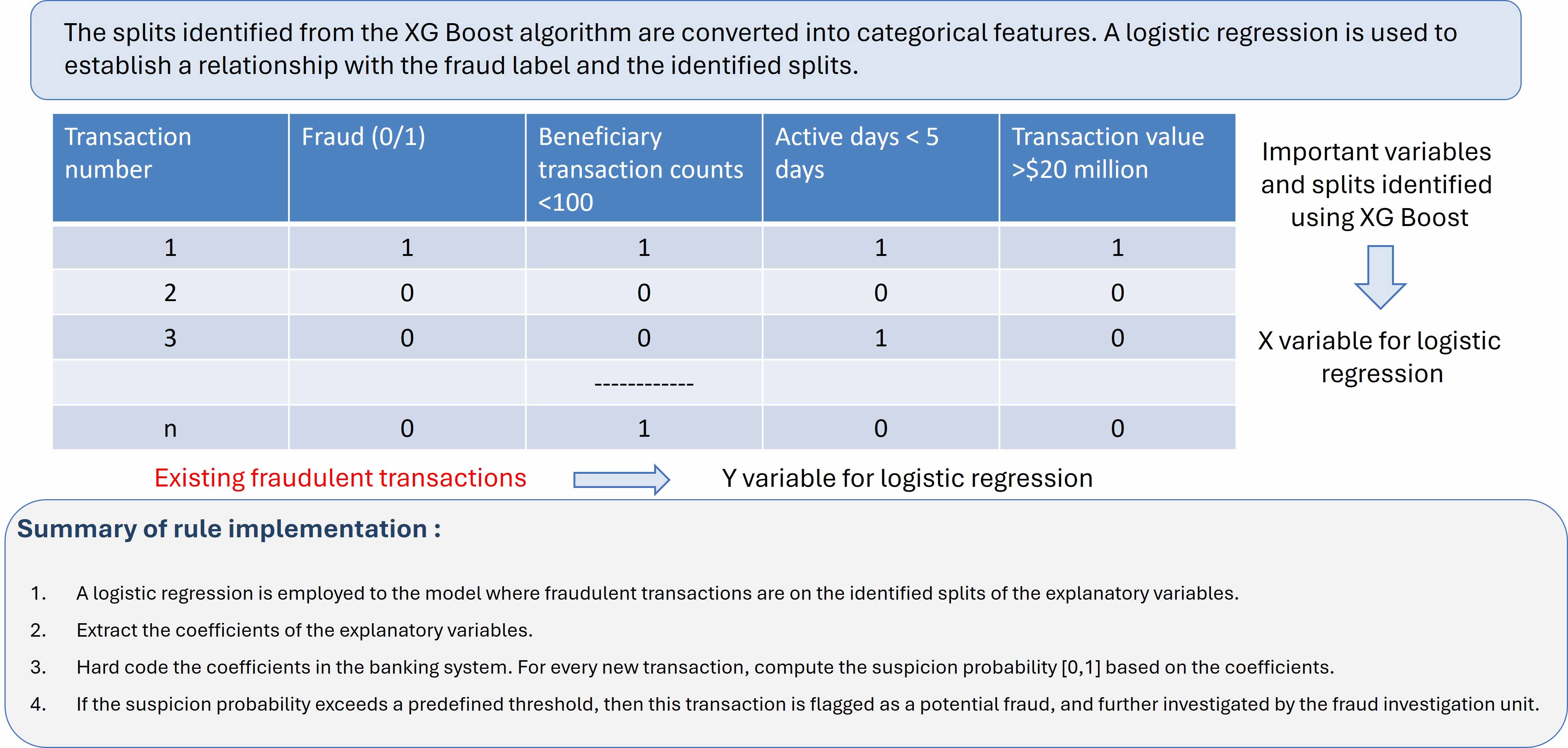

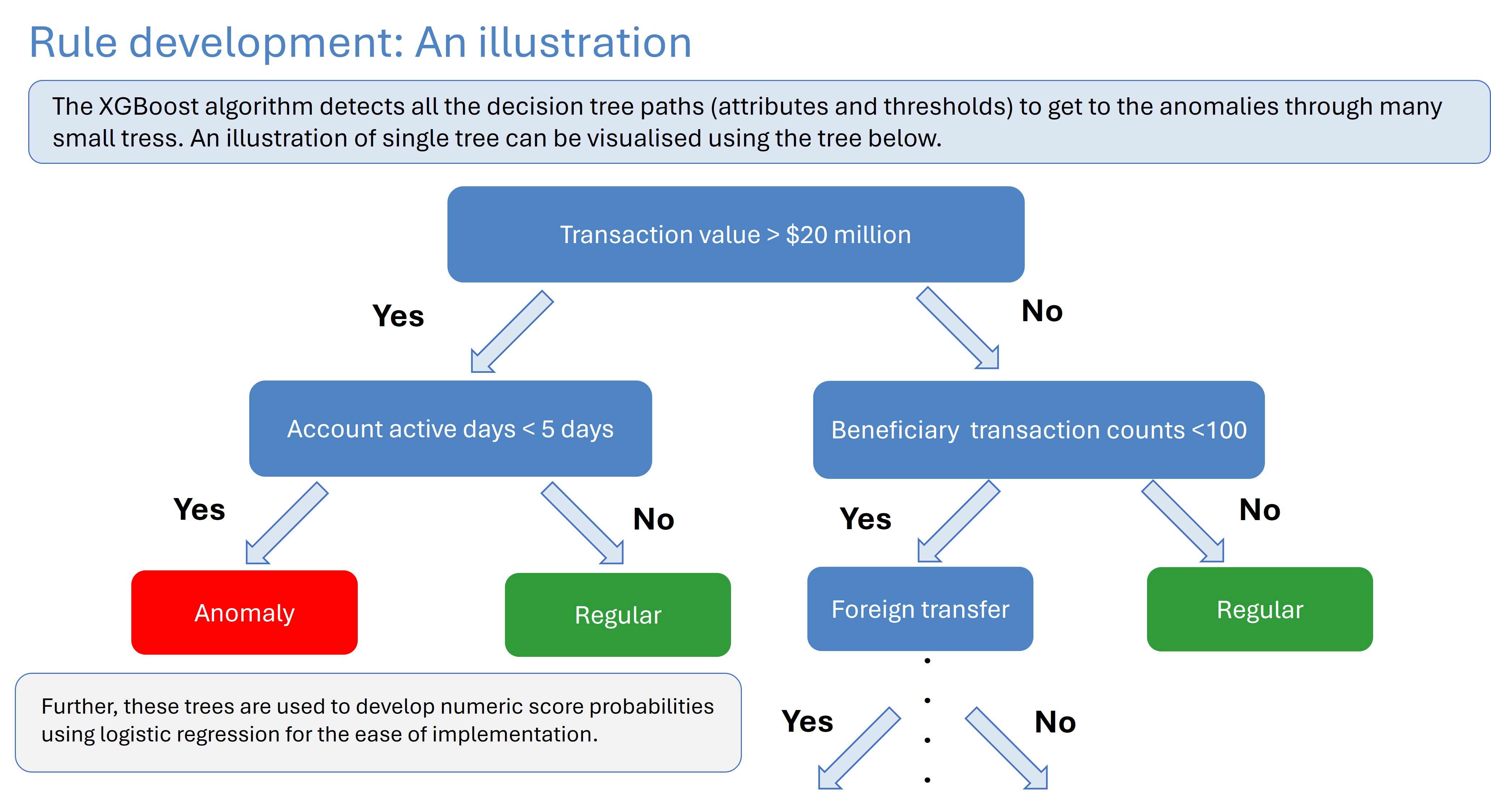

Rules emerge from XGBoost‑identified splits that map attributes and thresholds to anomaly labels across many small trees. These decision paths are transformed into categorical features (0/1), and logistic regression establishes relationships with the fraud label, yielding coefficients used to compute suspicion probabilities for incoming transactions (see Figure 2).
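The encode‑then‑regress step can be sketched in plain Python: each decision path becomes a 0/1 feature, and a logistic regression fitted by batch gradient descent yields an intercept and coefficients that convert rule hits into a suspicion probability. The two rules in `RULES` are hypothetical stand‑ins for paths that would, in the described workflow, be read from XGBoost splits, and the gradient‑descent fit replaces whatever solver the original implementation uses.

```python
import math

# Hypothetical decision paths expressed as predicates over a transaction dict
RULES = {
    "high_value_new_account": lambda t: t["amount"] > 20_000_000 and t["activity_days"] < 5,
    "foreign_few_beneficiaries": lambda t: t["foreign_transfer"] == 1 and t["beneficiary_count"] < 100,
}


def encode(txn, rules=RULES):
    """Turn each decision path into a 0/1 categorical feature."""
    return [1.0 if rule(txn) else 0.0 for rule in rules.values()]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Batch gradient descent on the log-loss; returns (intercept, coefficients)."""
    b, w = 0.0, [0.0] * len(X[0])
    n = len(X)
    for _ in range(epochs):
        grad_b, grad_w = 0.0, [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            grad_b += err
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
        b -= lr * grad_b / n
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
    return b, w


def suspicion_probability(txn, b, w, rules=RULES):
    """Apply the fitted coefficients to a transaction's rule indicators."""
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, encode(txn, rules))))
```

Keeping the model linear over binary rule indicators is what makes the coefficients directly implementable: each one states how much a single rule hit shifts the log‑odds of fraud.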

A transaction is flagged for investigation when its probability exceeds a predefined threshold. Illustrative paths combine conditions such as a transaction value above $20 million, an account active for fewer than five days, and fewer than 100 beneficiaries, with foreign transfer behaviour as an additional separator (see Figure 3).
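Representing a path as declarative conditions, rather than opaque code, keeps it evaluable and readable for audit. The sketch below encodes the illustrative path from the text and applies a probability threshold. The `Condition` type and helper names are hypothetical, the direction of the foreign‑transfer split (`== 1`) is an assumption because the text does not state it, and the 0.7 threshold is a placeholder.

```python
import operator
from typing import NamedTuple

OPS = {">": operator.gt, "<": operator.lt, ">=": operator.ge, "<=": operator.le, "==": operator.eq}


class Condition(NamedTuple):
    feature: str
    op: str
    threshold: float


# Illustrative path from the text; the foreign-transfer direction is assumed
ILLUSTRATIVE_PATH = [
    Condition("amount", ">", 20_000_000),
    Condition("activity_days", "<", 5),
    Condition("beneficiary_count", "<", 100),
    Condition("foreign_transfer", "==", 1),
]


def path_matches(txn, path):
    """True when the transaction satisfies every condition on the path."""
    return all(OPS[c.op](txn[c.feature], c.threshold) for c in path)


def describe(path):
    """Render a path as a human-readable rule for audit trails."""
    return " AND ".join(f"{c.feature} {c.op} {c.threshold}" for c in path)


def flag(probability, threshold=0.7):
    """Flag for investigation when the suspicion probability exceeds the (assumed) threshold."""
    return probability > threshold
```

For instance, `describe(ILLUSTRATIVE_PATH[:2])` renders `amount > 20000000 AND activity_days < 5`, a form that can be reviewed and versioned alongside the deployed coefficients.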

This pipeline ensures transparent, auditable rules that translate model evidence into operational controls within banking platforms, supporting consistent triage and monitoring.

Pattern recognition approach using decision trees, XGBoost, and GNN

Pattern recognition is the process of identifying recurring suspicious behavioural and relational patterns in transaction data that indicate fraudulent activity. It is used to flag transactions at unusual times, money‑laundering paths, and multiple transactions within a short period, among other suspicious activity (see Figure 4).

Core techniques include:

- Decision tree: Interpretable ‘IF‑THEN’ rules for non‑linear and conditional relationships.

- XGBoost: Identifies recurring features in fraudulent transactions for pattern discovery.

- Graph neural network (GNN): Constructs graphs with nodes (accounts, merchants, customers) and edges (transactions) to learn suspicious patterns.
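The text does not name a specific GNN architecture. As one possible illustration, the sketch below builds the graph from transaction pairs and runs a single GraphSAGE‑style layer with mean neighbour aggregation and ReLU in plain Python. The helper names are hypothetical, and `W_self` and `W_neigh` stand in for weight matrices that a trained model would learn from labelled fraud data.

```python
from collections import defaultdict


def build_graph(transactions):
    """Nodes are accounts, merchants, or customers; each transaction adds an undirected edge."""
    neighbours = defaultdict(set)
    for src, dst in transactions:
        neighbours[src].add(dst)
        neighbours[dst].add(src)
    return neighbours


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def sage_layer(features, neighbours, W_self, W_neigh):
    """One message-passing layer: h_v' = ReLU(W_self h_v + W_neigh * mean of neighbour h_u)."""
    dim = len(next(iter(features.values())))
    out = {}
    for v, h_v in features.items():
        nbrs = neighbours.get(v, ())
        if nbrs:
            mean = [sum(features[u][i] for u in nbrs) / len(nbrs) for i in range(dim)]
        else:
            mean = [0.0] * dim  # isolated node: no neighbour message
        z = [a + b for a, b in zip(matvec(W_self, h_v), matvec(W_neigh, mean))]
        out[v] = [max(0.0, zi) for zi in z]
    return out
```

Stacking two or more such layers lets an account's representation reflect counterparties several hops away, which is what allows a downstream classifier to pick up collusion that per‑transaction features miss.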

Applications of pattern recognition:

- Detecting unusual transaction timing and frequency

- Identifying laundering paths across multiple entities

- Flagging rapid transaction bursts that are indicative of collusion
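Frequency and timing checks like those listed above can be prototyped with a sliding window and a per‑customer hour‑of‑day profile. This minimal sketch assumes events arrive as `(account_id, unix_timestamp)` pairs; the helper names, the 10‑minute window, the five‑transaction limit, and the 2% hour‑share cut‑off are illustrative placeholders, not values from the source.

```python
from collections import defaultdict, deque


def rapid_bursts(events, window_seconds=600, max_txns=5):
    """Return {account: peak count} for accounts exceeding `max_txns` within any sliding window."""
    by_account = defaultdict(list)
    for account, ts in events:
        by_account[account].append(ts)

    flagged = {}
    for account, times in by_account.items():
        window = deque()
        for ts in sorted(times):
            window.append(ts)
            # Drop events that fall outside the window ending at `ts`
            while ts - window[0] >= window_seconds:
                window.popleft()
            if len(window) > max_txns:
                flagged[account] = max(flagged.get(account, 0), len(window))
    return flagged


def unusual_timing(history_hours, hour, min_share=0.02):
    """Flag a transaction whose hour of day the customer has rarely used before."""
    if not history_hours:
        return False
    share = history_hours.count(hour) / len(history_hours)
    return share < min_share
```

Six transfers from one account within five minutes, for example, exceed the default limit and are reported with their peak count of 6.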

Combined with anomaly detection, pattern recognition delivers a layered defense that adapts to evolving threats, transforming raw data into actionable intelligence and supporting investigators with network‑aware insights and GenAI‑assisted dashboards.

Strategic, AI‑enabled defense

An enterprise‑ready fraud detection capability blends multidimensional anomaly sensing, supervised rule discovery, and operational thresholds into a unified pipeline. By using wide‑ranging transaction and profile inputs, the model surfaces actionable signals, then codifies them through rules and coefficients for system deployment. Applications across theft, laundering, forgery, and phishing align with compliance objectives and investigative priorities, while hybrid and graph‑based architectures strengthen detection of collusion and synthetic identities.

Integrated dashboards and GenAI assistants support investigators with context and responsiveness. With scalable monitoring and real‑time flagging, institutions reduce losses, protect trust, and adapt to rapidly evolving techniques, anchoring fraud controls as a strategic, AI‑enabled defense.