Highlights

- Many GenAI pilots stall in production because of limitations in underlying data platforms, not the AI models themselves.

- Data platforms have become strategic assets shaping how quickly AI initiatives scale and how safely autonomous capabilities are introduced.

- Traditional platform evaluation methods, such as vendor feature matrices and analyst rankings, fall short in the GenAI era.

- TCS' six-pillar framework puts business needs first, emphasising strategic alignment, operational suitability, and responsible scaling.

From GenAI promise to operational reality

As enterprises move beyond generative AI (GenAI) proofs of concept, many are discovering that the limiting factor is no longer model capability but the data platforms beneath those models. Across industries, a familiar pattern emerges: pilots show promise but stall in production, costs spike unpredictably, and governance concerns surface only after systems are live. Platforms built for analytics are being pushed to support continuous, agent-driven decisioning, typically without the controls and observability that production demands.

Data platforms, once treated as largely invisible infrastructure, have become a strategic lever that directly influences an organisation’s AI trajectory. They shape how quickly AI initiatives can scale, how safely autonomous behaviour can be introduced, and how predictably costs and risks can be managed. Platform decisions made years ago are determining whether GenAI ambitions accelerate or stall.

Yet traditional evaluations are increasingly falling short of what enterprises need in the GenAI era. Organisations require a structured, context-anchored approach to platform evaluation. This paper introduces a business-first, six-pillar evaluation approach designed to assess platforms based on strategic alignment, operational fit, and the ability to scale GenAI and agentic capabilities responsibly over time.

Why traditional platform selection approaches are breaking down

Modern data platforms have steadily shifted from operational infrastructure to strategic enablers of growth, efficiency, and risk management. Their impact is now evident across sectors:

- Telecommunications: AI-enabled analytics and decisioning are increasingly central to improving customer retention, network performance, and fraud detection.

- Media and streaming: Advanced personalisation and content intelligence have become key drivers of growth, engagement, and competitive differentiation.

- Information services: Data productisation and API-driven decisioning are creating new commercial models, enabling faster, more scalable value delivery to customers.

Yet despite this evolution and growing importance, many enterprises still rely on familiar tools to evaluate data platforms: vendor feature matrices, analyst rankings, or polished demonstrations. These inputs can be useful, but they increasingly fail to capture what matters most in practice.

Feature parity is rising across the market. Capabilities that once differentiated platforms, such as semantic search, model integration, and vector support, are rapidly becoming baseline. Still, organisations experience very different outcomes after selecting platforms that look comparable on paper.

The gap lies in what traditional evaluations overlook:

- How platforms behave under real workloads and real governance constraints

- Whether operating models and skills align with platform assumptions

- How total cost changes over time as workloads, usage patterns, and governance maturity evolve

- How well the platform manages data residency, egress costs, ecosystem dependencies, and operational consistency across multi-cloud environments

This is where many organisations incur platform debt: the accumulated cost of choosing platforms that are ‘good enough’ for today’s use cases but structurally misaligned with tomorrow’s AI demands. Platform debt shows up as rigid data models, limited support for unstructured data, weak observability, or governance that cannot keep pace with autonomous systems.

Start with business context and operational fit

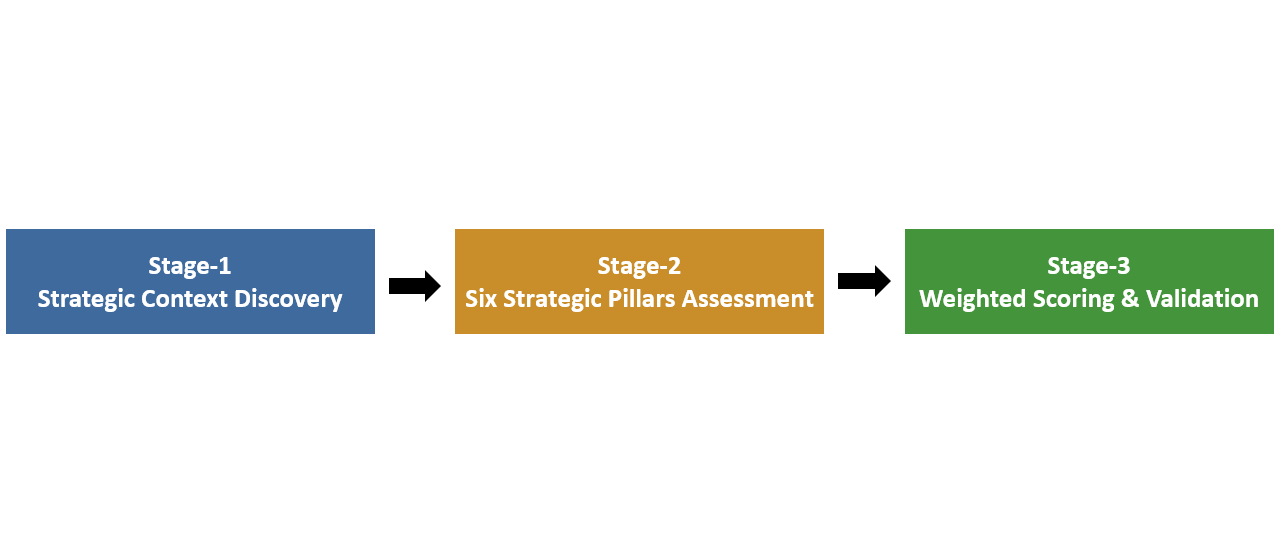

Against this backdrop, a business-first evaluation framework helps cut through feature noise and focuses on fit. The three-stage framework below aligns strategy, required capabilities, and platform choice.

Stage 1: Strategic context discovery

Organisations that make effective platform decisions begin with business context and operational reality rather than technology comparisons. Instead of asking, ‘Which platform has the most features?’ they ask, ‘Which platform best fits how we operate and where we are going?’

This starts by clarifying a small number of priority outcomes, such as:

- AI-enabled revenue growth and new data products

- Operational efficiency through automation and decisioning

- Improved customer and employee experience

- Stronger compliance, governance, and trust

Equally important is understanding dominant use-case patterns. Telecommunications providers optimising real-time networks face different requirements from media companies driving personalisation or information services firms delivering decision APIs at scale. These patterns influence latency needs, data variety, and governance depth.

Operational fit also matters. Existing cloud commitments, regulatory exposure, skills availability, and financial constraints shape what success looks like. A platform that excels in theory but clashes with organisational reality often becomes a long-term liability.

Finally, translating business objectives into platform decisions requires an explicit bridge between intent and execution. A clear strategy-to-architecture brief defines the required data and AI capabilities, success metrics, and platform characteristics. It also makes two artefacts explicit: a decision rights matrix that clarifies where AI agents can act autonomously and where human approval is required, and a risk register that identifies sensitive data domains and acceptable action boundaries.
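To make the decision rights matrix and risk register more concrete, the sketch below encodes both as simple lookup tables in Python. The action names, data domains, monetary boundaries, and the `required_autonomy` helper are hypothetical illustrations, not part of any specific platform. The main design choice is that any action missing from the matrix defaults to human approval rather than autonomy, and an otherwise autonomous action is escalated when it exceeds the boundary recorded for its domain.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    AUTONOMOUS = "agent may act without approval"
    HUMAN_APPROVAL = "human approval required"
    PROHIBITED = "agent may not act"


@dataclass(frozen=True)
class RiskEntry:
    """Hypothetical risk-register row for a sensitive data domain."""
    domain: str
    max_autonomous_value: float  # acceptable action boundary, e.g. a refund amount


# Hypothetical decision rights matrix: (action, data domain) -> autonomy level.
DECISION_RIGHTS = {
    ("recommend_offer", "customer_profile"): Autonomy.AUTONOMOUS,
    ("issue_refund", "billing"): Autonomy.AUTONOMOUS,
    ("change_network_config", "network_telemetry"): Autonomy.HUMAN_APPROVAL,
    ("export_data", "customer_profile"): Autonomy.PROHIBITED,
}

# Hypothetical risk register keyed by sensitive data domain.
RISK_REGISTER = {
    "customer_profile": RiskEntry("customer_profile", max_autonomous_value=0.0),
    "billing": RiskEntry("billing", max_autonomous_value=50.0),
}


def required_autonomy(action: str, domain: str, value: float = 0.0) -> Autonomy:
    """Resolve how much autonomy an agent has for one action on one domain."""
    # Unlisted actions fall back to human approval rather than autonomy.
    level = DECISION_RIGHTS.get((action, domain), Autonomy.HUMAN_APPROVAL)
    risk = RISK_REGISTER.get(domain)
    if level is Autonomy.AUTONOMOUS and risk is not None and value > risk.max_autonomous_value:
        # The action exceeds the register's boundary for this domain: escalate.
        return Autonomy.HUMAN_APPROVAL
    return level
```

For example, a 30-unit refund on `billing` stays autonomous, while a 120-unit refund is routed to a human.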

Embedding responsible AI as an enabler, not a constraint

Early adopters are learning that governance cannot be postponed. As agentic systems move closer to production, the absence of baseline controls quickly halts progress. Establishing minimum viable controls does not slow innovation. It enables it by making scale trustworthy.

Minimum viable GenAI controls include:

- Policy as code for access and safety

- End-to-end trace and action logs

- Automated evaluations for factuality and safety

- Red team and rollback playbooks

- Human-in-the-loop oversight for material decisions
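As one illustration of how policy as code, end-to-end trace logs, and human-in-the-loop oversight fit together, the following minimal sketch checks each proposed agent action against a list of declarative rules and records every decision, whether allowed or denied. The `Action` fields, classification labels, policy names, and the in-memory `TraceLog` are assumptions made for illustration; a production deployment would more likely rely on a dedicated policy engine and durable, tamper-evident log storage.

```python
import json
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    """A proposed agent action (all fields are hypothetical)."""
    agent_id: str
    name: str
    data_classification: str  # hypothetical labels: "public", "internal", "restricted"
    material: bool            # True if the decision is material enough to need sign-off


# A policy returns a denial reason, or None when the action passes.
Policy = Callable[[Action], Optional[str]]

POLICIES: list[tuple[str, Policy]] = [
    ("no_restricted_data",
     lambda a: "restricted data not permitted" if a.data_classification == "restricted" else None),
    ("human_in_the_loop",
     lambda a: "material decision needs human approval" if a.material else None),
]


@dataclass
class TraceLog:
    """In-memory, append-only stand-in for an end-to-end action log."""
    entries: list[str] = field(default_factory=list)

    def record(self, **event) -> None:
        self.entries.append(json.dumps(event, sort_keys=True))


def evaluate(action: Action, log: TraceLog) -> bool:
    """Check an action against every policy and log the outcome either way."""
    denials = []
    for name, policy in POLICIES:
        reason = policy(action)
        if reason is not None:
            denials.append(f"{name}: {reason}")
    log.record(
        trace_id=str(uuid.uuid4()),
        timestamp=time.time(),
        agent_id=action.agent_id,
        action=action.name,
        allowed=not denials,
        denials=denials,
    )
    return not denials
```

Logging denied actions as well as allowed ones is deliberate: red-team reviews and rollback decisions depend on seeing what agents attempted, not only what they did.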

Stage 2: Six strategic pillars assessment

Once business context is established, platforms should be evaluated against six strategic pillars that map to business outcomes, with weightings adjusted based on organisational priorities and operating context. Together, these pillars provide a practical lens for assessing not just technical capability, but long-term fit and readiness in the GenAI era.

Pillar 1: Strategic alignment and ecosystem fit (15–25%) determines how quickly value can be realised. Platforms that integrate naturally with identity services, landing zones, analytics tools, and partner ecosystems reduce friction and accelerate adoption.

Pillar 2: A resilient data management foundation (20–30%) remains essential. Platforms must support structured, unstructured, and streaming data at the required scale and latency, recognising that different industries place very different demands on data architectures (for example, telecom telemetry at scale versus media content metadata and personalisation signals).

Pillar 3: Analytics, insights, and user experience (15–25%) evaluates how effectively the platform enables productivity across data scientists, analysts, and business users, balancing advanced engineering capabilities with accessibility for SQL‑ and BI‑driven teams.

Pillar 4: Architecture, technology, and security (15–25%) covers scalability, performance, lineage, policy enforcement, encryption, and certifications. Governance maturity, especially lineage depth and policy-as-code coverage, often differentiates platforms more than baseline security features.

Pillar 5: Cost optimisation and delivery (10–20%) requires a realistic multi-year view of total cost of ownership across compute, storage, networking, operations, and enablement, including the trade-offs between flexible consumption models and predictable capacity-based approaches.
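A multi-year TCO view can be roughed out before vendor quotes arrive. The sketch below compares a consumption model, where every unit of usage is billed, with a capacity model, where usage is bought in whole committed blocks. All units, prices, growth rates, and the `CostAssumptions` fields are hypothetical placeholders to be replaced with real estimates, and the model deliberately ignores discounts, commitment terms, and price changes.

```python
import math
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    """Hypothetical inputs; replace with organisation-specific estimates."""
    base_usage: float           # compute units consumed in year 1
    annual_growth: float        # e.g. 0.4 for 40% year-on-year usage growth
    on_demand_price: float      # price per unit under consumption pricing
    block_size: float           # units per committed capacity block
    block_price: float          # annual price per committed block
    storage_and_network: float  # annual storage, networking, and egress estimate
    operations: float           # annual operations and enablement estimate


def usage_by_year(a: CostAssumptions, years: int) -> list[float]:
    """Project usage with compound annual growth."""
    return [a.base_usage * (1 + a.annual_growth) ** y for y in range(years)]


def tco(a: CostAssumptions, years: int) -> dict[str, float]:
    """Total cost over the horizon under each pricing model."""
    fixed = a.storage_and_network + a.operations
    consumption = capacity = 0.0
    for usage in usage_by_year(a, years):
        consumption += usage * a.on_demand_price + fixed
        # Capacity is bought in whole blocks, so unused headroom is still paid for.
        capacity += math.ceil(usage / a.block_size) * a.block_price + fixed
    return {"consumption": consumption, "capacity": capacity}
```

Which model comes out ahead depends on how the block price compares with buying the same units on demand and on how much of each block is actually used; running `tco` across several growth scenarios shows where the two models cross over.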

Pillar 6: Future readiness for GenAI and agentic enablement (20–30%) evaluates readiness to support production-grade AI workloads, including advanced retrieval, agentic orchestration, observability of prompts and actions, and governance mechanisms that ensure AI operates safely, transparently, and at scale.
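Because each pillar's weighting is given as a range, any chosen profile must keep every pillar inside its range and still total 100%. The stated minimums sum to 95% and the maximums to 155%, so many valid profiles exist. The sketch below checks a proposed profile; the pillar keys are hypothetical shorthand for the six pillars, while the ranges are copied from the pillar descriptions.

```python
# Stated weighting ranges (percent) per pillar; keys are hypothetical shorthand.
PILLAR_RANGES = {
    "strategic_alignment": (15, 25),
    "data_management": (20, 30),
    "analytics_ux": (15, 25),
    "architecture_security": (15, 25),
    "cost_delivery": (10, 20),
    "genai_readiness": (20, 30),
}


def validate_weights(weights: dict[str, float]) -> list[str]:
    """Return a list of problems; an empty list means the profile is usable."""
    problems = []
    missing = PILLAR_RANGES.keys() - weights.keys()
    if missing:
        problems.append(f"missing pillars: {sorted(missing)}")
    for pillar, weight in weights.items():
        if pillar not in PILLAR_RANGES:
            problems.append(f"unknown pillar: {pillar}")
            continue
        low, high = PILLAR_RANGES[pillar]
        if not low <= weight <= high:
            problems.append(f"{pillar}={weight}% outside {low}-{high}%")
    total = sum(weights.values())
    if abs(total - 100) > 1e-9:
        problems.append(f"weights sum to {total}%, not 100%")
    return problems
```

A profile that leans towards data management (25%) while holding GenAI readiness at its 20% floor passes; dropping GenAI readiness to 15% is flagged even though the total is still 100%.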

Stage 3: Weighted scoring and validation

Even the most thoughtful framework remains theoretical until tested. With priorities defined, platforms should be scored against criteria weighted by business objectives, rather than treating all criteria as equal. Initial rankings provide directional insight, but final decisions should be validated through focused proofs of concept grounded in priority use cases and realistic workload patterns. This confirms that the platform performs as expected under real operational, governance, and cost conditions.
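Stage 3 can be illustrated with a weighted-sum score. The sketch assumes each pillar is scored on a 1 to 5 scale (an assumption; the framework does not prescribe a scale) and that weights are percentages summing to 100. Rather than declaring a single winner, it returns a shortlist of platforms whose score falls within a hypothetical `poc_margin` of the leader, reflecting the point that initial rankings are directional and close contenders should proceed to a proof of concept.

```python
# Hypothetical weighting profile (percent); each value sits inside its pillar's stated range.
WEIGHTS = {
    "strategic_alignment": 15,
    "data_management": 25,
    "analytics_ux": 15,
    "architecture_security": 15,
    "cost_delivery": 10,
    "genai_readiness": 20,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-pillar scores (assumed 1-5 scale) using percentage weights."""
    return sum(scores[pillar] * weight for pillar, weight in weights.items()) / 100


def rank_platforms(
    candidates: dict[str, dict[str, float]],
    weights: dict[str, float],
    poc_margin: float = 0.25,
) -> tuple[list[tuple[str, float]], list[str]]:
    """Rank platforms and shortlist those close enough to the leader to need a PoC."""
    ranked = sorted(
        ((name, weighted_score(scores, weights)) for name, scores in candidates.items()),
        key=lambda item: item[1],
        reverse=True,
    )
    leader_score = ranked[0][1]
    # A narrow lead is directional only: every near-leader goes to proof of concept.
    shortlist = [name for name, score in ranked if leader_score - score <= poc_margin]
    return ranked, shortlist
```

Scoring the same candidates under two or three alternative weighting profiles is a cheap way to see whether the ranking is robust or hinges on a single contested weight.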

Platform choice as a strategic commitment

The most important shift in thinking is recognising that platform selection is not a reversible, short-term decision. It is a strategic commitment that shapes an organisation’s AI capabilities for years to come.

The real risk is not choosing the wrong platform. It is choosing one that is good enough today but unfit for tomorrow.

Organisations that succeed treat platform decisions as long-term enablers. They pair selection with disciplined validation, skills investment, and governance foundations, and continuously optimise against business outcomes.

By anchoring decisions in business context, prioritising operational fit, and designing for agentic AI from the outset, enterprises can reduce platform debt and build data foundations that scale responsibly into the next phase of GenAI.